AI is no longer experimental — it's embedded in daily business operations. Large language models now power customer support, internal copilots, analytics workflows, and decision systems. These systems don't just process structured data; they interact with sensitive, unstructured information in real time, including customer conversations, internal documents, and financial data.

However, while adoption has accelerated, control has not kept pace. Most organizations still rely on traditional security and compliance systems that were not designed for AI. These systems monitor activity after it occurs — they log events and flag issues, but they do not intercept or control AI behavior in real time, allowing risk to accumulate.

The dangers are not imaginary:

- Sensitive data moves beyond your perimeter without any restrictions

- AI outputs are untraceable, unexplainable, and unauditable

- Compliance is reactive — violations are discovered at audit, not prevented at runtime

This creates a fundamental gap between how AI systems operate and how organizations try to manage them. AI drives decisions and data exchange in real time. Governance, by contrast, is usually delayed, fragmented, or manual.

That is not sustainable at enterprise scale.

Control cannot be an afterthought. It cannot depend on logs and post-event reviews. It must be embedded into the execution layer of AI systems — where every request, every response, and every data exchange can be evaluated before it happens.

Control needs to happen before execution, not after.

And this is exactly where the concept of an AI gateway comes in.

What Is an AI Gateway?

An AI gateway is the central control point that sits between your applications and the AI models they rely on. Instead of allowing systems to communicate directly with external LLM providers, every request is routed through this centralized layer — where it can be evaluated, modified, or blocked before anything happens.

At its core, an AI gateway introduces control at the exact point where AI is used. When a request is generated, it is not sent directly to the model. It is intercepted, evaluated against policies, and only then allowed to proceed. Requests containing sensitive data or policy violations can be modified or blocked outright. The same applies to responses — ensuring outputs remain aligned with organizational standards.

This transforms how enterprises use AI. Instead of disconnected, unsupervised integrations, organizations gain a single, controlled entry point. All interactions are monitored and governed in real time, bringing structure and consistency to what would otherwise be fragmented usage.

In practical terms, an AI gateway turns AI from a set of scattered integrations into a controlled, enforceable system.

AI Gateway vs. API Gateway — What's the Difference?

If you're familiar with API gateways, you might wonder: isn't this the same thing?

Not quite.

An API gateway manages service-to-service traffic. It handles routing, rate limiting, authentication, and load balancing. It's built for structured, predictable request patterns between known services.

An AI gateway goes further. It sits in the same position — between your applications and external providers — but it understands AI-specific risks and enforces governance at runtime:

| Capability | API Gateway | AI Gateway |
|---|---|---|
| Request routing | Yes | Yes |
| Rate limiting | Yes | Yes |
| Policy enforcement at runtime | No | Yes |
| PII detection and redaction | No | Yes |
| Prompt/response inspection | No | Real-time |
| Compliance audit trails | No | Automatic |
| Data sovereignty controls | No | Enforced |
| Model provider abstraction | No | Yes |

The core difference: AI interactions involve unstructured, natural language inputs that can contain anything — customer PII, proprietary information, regulated data. An API gateway has no concept of this. An AI gateway is purpose-built to intercept, inspect, and enforce policy on these interactions before they reach external model providers.

Why Enterprises Need an AI Gateway

Without a centralized gateway, AI usage inside an enterprise grows in an unstructured way. Different teams adopt different providers and build integrations independently, and over time no one has a clear view of how AI is being used or what it costs. This lack of coordination leads to duplicated effort, uncontrolled spend, and an inability to enforce consistent rules.

An AI gateway addresses this by introducing a unified layer through which all AI interactions are managed.

Data Sovereignty and Protection

Every AI request that leaves your perimeter carries risk. Customer data, internal documents, and proprietary information can be transmitted to third-party model providers without restriction — unless a control layer intercepts it first.

An AI gateway enables organizations to:

- Detect and redact PII before requests reach external models

- Enforce data residency requirements by routing to approved providers

- Prevent sensitive data exfiltration through real-time content inspection

- Maintain full control over what information leaves your environment

Compliance and Regulatory Alignment

Frameworks like the EU AI Act, GDPR, and ISO 42001 are not abstract guidelines — they impose concrete obligations on how AI systems handle data, make decisions, and maintain audit trails.

An AI gateway provides the operational layer where compliance is enforced, not just documented:

- Real-time policy enforcement on every interaction

- Comprehensive audit trails that satisfy regulatory requirements

- Consistent governance across all teams and use cases

- Automated compliance reporting instead of manual documentation

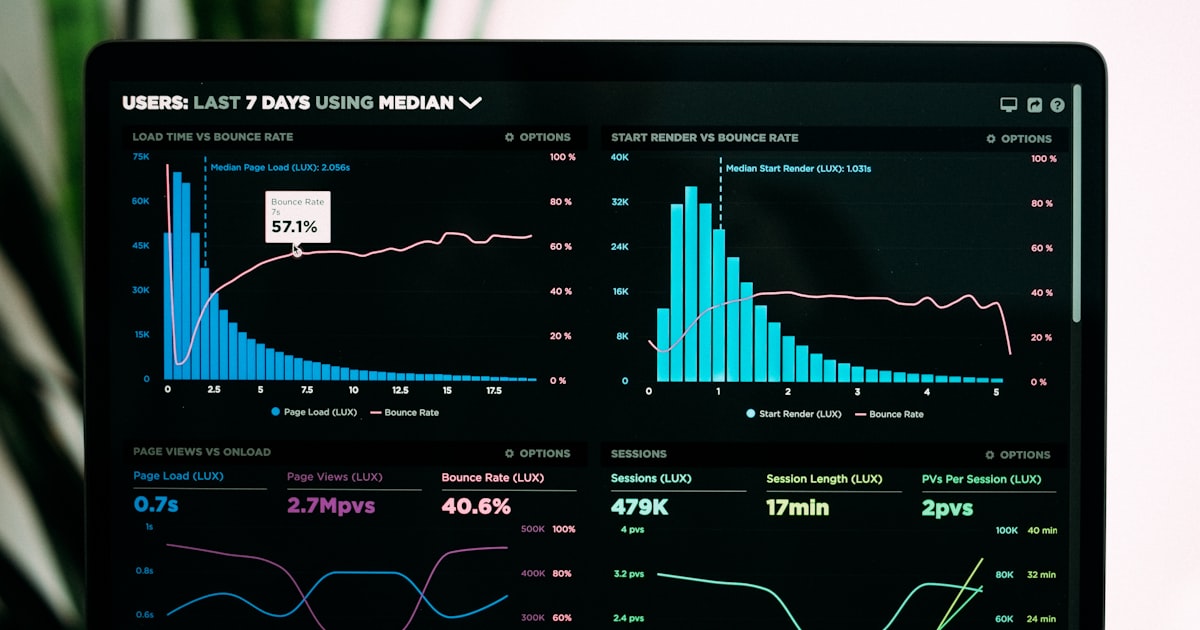

Cost Visibility and Optimization

With centralized tracking, organizations can monitor usage across every team, provider, and model. This enables:

- Token-level cost attribution by team, project, or use case

- Budget controls and spend limits enforced at runtime

- Provider comparison based on actual performance and cost data

- Elimination of duplicate or wasteful API calls
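As a rough illustration of token-level attribution and runtime spend limits, the sketch below keeps a per-team ledger and refuses a call that would exceed the team's budget. The model names, prices, and the `CostLedger` class are hypothetical and chosen only for the example; real prices vary by provider and model.

```python
from collections import defaultdict
from dataclasses import dataclass, field

# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K_TOKENS = {"model-small": 0.5, "model-large": 5.0}


class BudgetExceeded(Exception):
    """Raised when a request would push a team past its spend limit."""


@dataclass
class CostLedger:
    budgets: dict[str, float]  # team -> spend limit in USD
    spend: dict[str, float] = field(default_factory=lambda: defaultdict(float))

    def charge(self, team: str, model: str, tokens: int) -> float:
        """Attribute the cost of a call to a team, refusing it if over budget."""
        cost = tokens / 1000 * PRICE_PER_1K_TOKENS[model]
        # Teams without a configured budget get a limit of zero (deny by default).
        if self.spend[team] + cost > self.budgets.get(team, 0.0):
            raise BudgetExceeded(f"{team} would exceed its budget")
        self.spend[team] += cost
        return cost


ledger = CostLedger(budgets={"support": 10.0})
ledger.charge("support", "model-large", 1000)  # recorded against the support team
```

Because the check runs before the call is forwarded, the limit is enforced at runtime rather than discovered on the next invoice.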

Operational Stability

By regulating request flow through a single layer, organizations prevent overload, ensure consistent performance, and gain the ability to:

- Load balance across multiple providers

- Failover automatically when a provider is unavailable

- Apply rate limits per team or application

- Cache repeated requests to reduce latency and cost
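Failover and caching can be sketched in a few lines. The example below assumes each provider is simply a function from prompt to completion, and it caches on exact prompt match only; `flaky_provider` and `backup_provider` are stand-ins, not real integrations.

```python
from typing import Callable

Provider = Callable[[str], str]  # takes a prompt, returns a completion


class AllProvidersFailed(Exception):
    """Raised when every configured provider raised an error."""


def call_with_failover(prompt: str, providers: list[Provider], cache: dict[str, str]) -> str:
    """Return a cached answer if present; otherwise try providers in order."""
    if prompt in cache:
        return cache[prompt]
    errors = []
    for provider in providers:
        try:
            answer = provider(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(exc)
            continue
        cache[prompt] = answer
        return answer
    raise AllProvidersFailed(errors)


def flaky_provider(prompt: str) -> str:  # stand-in for an unavailable provider
    raise ConnectionError("provider unavailable")


def backup_provider(prompt: str) -> str:  # stand-in for a healthy provider
    return f"echo: {prompt}"


cache: dict[str, str] = {}
result = call_with_failover("hello", [flaky_provider, backup_provider], cache)
```

A production cache would also need expiry and a decision about which requests are safe to cache at all.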

Key Features of an Enterprise AI Gateway

Not all AI gateways are created equal. At the enterprise level, the following capabilities are non-negotiable:

1. Intelligent Request Routing

Route requests to different model providers based on cost, performance, data sensitivity, or compliance requirements. A request containing EU citizen data might be routed to a European-hosted model, while a low-sensitivity internal query goes to the cheapest available provider.
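A minimal sketch of that routing rule, assuming the gateway already knows whether a request contains EU personal data: filter providers by the residency constraint, then pick the cheapest. The provider names, regions, and costs are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderOption:
    name: str
    region: str  # where the model is hosted, e.g. "eu" or "us"
    cost_per_1k_tokens: float


@dataclass(frozen=True)
class RoutingRequest:
    contains_eu_personal_data: bool


def choose_provider(request: RoutingRequest, options: list[ProviderOption]) -> ProviderOption:
    """Pick the cheapest provider that satisfies the residency constraint."""
    eligible = [
        o for o in options
        if not request.contains_eu_personal_data or o.region == "eu"
    ]
    if not eligible:
        raise LookupError("no provider satisfies the routing policy")
    return min(eligible, key=lambda o: o.cost_per_1k_tokens)


OPTIONS = [
    ProviderOption("eu-hosted-model", region="eu", cost_per_1k_tokens=3.0),
    ProviderOption("budget-model", region="us", cost_per_1k_tokens=1.0),
]
```

Treating compliance as a hard filter and cost as the tie-breaker keeps a cheaper provider from ever overriding a residency rule.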

2. PII Detection and Redaction

Automatically scan every prompt for personally identifiable information — names, email addresses, social security numbers, financial data — and redact or mask it before the request reaches the model provider. This happens in real time, with zero manual intervention.
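To make the idea concrete, here is a deliberately simple redaction pass using regular expressions. The two patterns are illustrative assumptions, not a complete detector: names and free-form financial data generally need entity-recognition models, which regexes cannot replace.

```python
import re

# Illustrative patterns only; real detectors cover many more categories.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] tag and count matches per label."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


redacted, found = redact("Contact jane.doe@example.com, SSN 123-45-6789.")
```

Returning the counts alongside the redacted text gives the audit log something to record without storing the sensitive values themselves.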

3. Policy Enforcement Engine

Define granular policies that govern what AI can and cannot do. Block specific prompt patterns. Restrict access to certain models. Enforce response filtering. These policies execute at runtime on every single interaction.
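One way to picture such an engine is as a list of declarative rules checked on every request. In the sketch below, the `Policy` fields, policy names, and model names are all hypothetical; a real engine would support far richer conditions.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Policy:
    name: str
    blocked_pattern: Optional[str] = None       # regex that must not appear in the prompt
    allowed_models: Optional[frozenset] = None  # None means any model is allowed


def evaluate(prompt: str, model: str, policies: list[Policy]) -> list[str]:
    """Return the names of all violated policies; an empty list means allow."""
    violations = []
    for p in policies:
        pattern_hit = p.blocked_pattern is not None and re.search(
            p.blocked_pattern, prompt, re.IGNORECASE
        )
        model_denied = p.allowed_models is not None and model not in p.allowed_models
        if pattern_hit or model_denied:
            violations.append(p.name)
    return violations


POLICIES = [
    Policy("no-private-keys", blocked_pattern=r"BEGIN (RSA|OPENSSH) PRIVATE KEY"),
    Policy("approved-models-only", allowed_models=frozenset({"model-a", "model-b"})),
]
```

Keeping policies as data rather than code is what allows them to be changed without redeploying the applications behind the gateway.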

4. Complete Audit Trails

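Log every request, response, policy decision, and data transformation. These logs must be structured, searchable, and detailed enough to satisfy regulatory auditors. This is not optional for regulated use cases: the EU AI Act imposes record-keeping obligations on high-risk AI systems, and ISO/IEC 42001 certification depends on documented evidence that controls are operating.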
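A structured audit entry might look like the sketch below: one JSON object per interaction. The field names are assumptions, and the hash chain linking each entry to the previous one is an optional tamper-evidence technique added here for illustration, not something any regulation prescribes in this form.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(request_id: str, team: str, model: str, prompt: str,
                 response: str, decisions: list[str], prev_hash: str = "") -> dict:
    """Build one structured audit entry, chained to the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request_id": request_id,
        "team": team,
        "model": model,
        "prompt": prompt,        # assumed to be the post-redaction text
        "response": response,
        "policy_decisions": decisions,
        "prev_hash": prev_hash,
    }
    # Hash a canonical serialization so any later change to the entry is detectable.
    canonical = json.dumps(entry, sort_keys=True)
    entry["entry_hash"] = hashlib.sha256(canonical.encode()).hexdigest()
    return entry


first = audit_record("req-1", "support", "model-a", "hi", "hello", ["allow"])
second = audit_record("req-2", "support", "model-a", "mail [EMAIL]", "done",
                      ["redacted:EMAIL"], prev_hash=first["entry_hash"])
print(json.dumps(second))  # one JSON object per line is easy to search and ship
```

Whether raw prompts may be stored at all depends on your retention policy; storing only redacted text is one conservative default.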

5. Provider Abstraction

Decouple your applications from specific model providers. Switch between OpenAI, Anthropic, Gemini, DeepSeek, Mistral, or self-hosted models without changing a single line of application code. This reduces vendor lock-in and enables rapid adaptation.
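The decoupling usually comes down to a small shared interface with one adapter per provider. The sketch below uses a `typing.Protocol`; `ProviderA` and `ProviderB` are placeholder adapters that return canned text, where real ones would call the vendor's SDK or HTTP API.

```python
from typing import Protocol


class ChatProvider(Protocol):
    def complete(self, prompt: str) -> str: ...


class ProviderA:
    """Stand-in adapter; a real one would call that vendor's SDK or HTTP API."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class ProviderB:
    """Second stand-in adapter with the same interface."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


REGISTRY: dict[str, ChatProvider] = {"a": ProviderA(), "b": ProviderB()}


def complete(prompt: str, provider_key: str = "a") -> str:
    """Application code calls this; the provider is chosen by configuration."""
    return REGISTRY[provider_key].complete(prompt)
```

Switching providers then means changing `provider_key` in configuration, while callers of `complete` stay untouched.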

6. Access Control and Authentication

Centralize API key management and implement fine-grained access controls. Determine which teams, applications, or users can access which models, with what data, and under what conditions.
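As a sketch of fine-grained access control, the check below answers "may this team call this model with data of this sensitivity?" The `Grant` structure, team names, and the 0 to 3 sensitivity scale are hypothetical conventions for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    team: str
    models: frozenset     # models this team may call
    max_sensitivity: int  # highest data class allowed: 0 = public ... 3 = restricted


GRANTS = {
    "marketing": Grant("marketing", frozenset({"model-small"}), max_sensitivity=1),
    "finance": Grant("finance", frozenset({"model-small", "model-large"}), max_sensitivity=3),
}


def is_allowed(team: str, model: str, sensitivity: int) -> bool:
    """Deny by default: unknown teams and ungranted models are refused."""
    grant = GRANTS.get(team)
    return (
        grant is not None
        and model in grant.models
        and sensitivity <= grant.max_sensitivity
    )
```

Because applications authenticate to the gateway rather than to providers, provider API keys never need to be distributed to individual teams.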

AI Gateway Architecture: How It Intercepts Every Request

Understanding how an AI gateway works at a technical level clarifies why it is essential for enterprise governance.

The gateway sits between enterprise applications and LLM providers, intercepting and governing every request in six steps:

Step 1: Interception. When an application generates an AI request, it does not go directly to the model provider. The gateway intercepts it.

Step 2: Policy evaluation. The request is analyzed against predefined policies — data handling rules, access permissions, compliance requirements, and content restrictions.

Step 3: PII detection and redaction. Sensitive data within the prompt is identified and handled according to policy — redacted, masked, or flagged for review.

Step 4: Intelligent routing. Based on policy outcomes, cost rules, and performance requirements, the request is routed to the appropriate model provider.

Step 5: Response inspection. The model's response passes back through the gateway, where it is evaluated for compliance, toxicity, hallucination indicators, or policy violations.

Step 6: Complete logging. The entire interaction — input, output, policy decisions, data transformations, routing choices — is logged with full context for audit and analysis.

This lifecycle ensures that no AI interaction operates outside of organizational control.
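The six steps above can be condensed into a toy pipeline. Everything here is a simplified assumption: the blocked term, the single email pattern, and `fake_model` stand in for real policies, PII detectors, and provider calls.

```python
import re
from dataclasses import dataclass, field

EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
BLOCKED_TERMS = ("project-titan",)  # hypothetical restricted codename


@dataclass
class Outcome:
    status: str                              # "allowed" or "blocked"
    response: str = ""
    log: list = field(default_factory=list)  # step 6: trace of every decision


def fake_model(prompt: str) -> str:  # stand-in for a real provider call
    return f"echo: {prompt}"


def handle(prompt: str) -> Outcome:
    out = Outcome(status="allowed")
    out.log.append("intercepted")                              # step 1: interception
    if any(term in prompt.lower() for term in BLOCKED_TERMS):  # step 2: policy evaluation
        out.status = "blocked"
        out.log.append("policy:blocked")
        return out
    prompt, n = EMAIL.subn("[EMAIL]", prompt)                  # step 3: PII redaction
    out.log.append(f"redactions:{n}")
    provider = fake_model                                      # step 4: routing (one route here)
    out.log.append("routed:fake_model")
    response = provider(prompt)
    if EMAIL.search(response):                                 # step 5: response inspection
        response = EMAIL.sub("[EMAIL]", response)
        out.log.append("response:redacted")
    out.response = response
    out.log.append("completed")
    return out
```

Note that a blocked request returns before any provider is called, which is the practical meaning of control before execution.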

The Risk Most Enterprises Underestimate: Shadow AI

One of the biggest threats to enterprise AI governance does not come from your sanctioned systems; it comes from shadow AI. This includes employees using tools like ChatGPT independently, teams integrating external APIs without approval, or departments building AI workflows without IT oversight.

While often dismissed as harmless, shadow AI creates unregulated activity where sensitive data — internal documents, customer information, proprietary insights — can be exposed without any control or visibility.

You cannot govern what you cannot see.

Without a centralized gateway that captures all AI interactions, compliance cannot be enforced, data exposure cannot be tracked, and audit trails cannot be maintained. Shadow AI is not a theoretical risk — it is happening right now in most enterprises.

An AI gateway addresses this by becoming the single, mandatory path through which all AI interactions must flow — making shadow usage visible and governable.

Evaluating AI Gateway Solutions: What to Look For

When assessing AI gateway solutions for your enterprise, focus on these criteria:

Must-Have Capabilities

- Runtime policy enforcement — policies must execute on live traffic, not just in testing

- PII detection accuracy: false-negative rates on sensitive data must be measured and kept as low as possible

- Multi-provider support — avoid solutions that lock you into a single LLM provider

- Comprehensive logging — logs must support regulatory audit requirements

- Low latency — the gateway should not meaningfully impact response times

- Scalability — must handle enterprise-volume request loads without degradation

Questions to Ask Vendors

- Does the gateway enforce policies at runtime, or only log violations?

- Can policies be updated without redeploying the gateway?

- What PII categories are detected, and what is the false negative rate?

- How are audit logs structured, and do they satisfy EU AI Act requirements?

- What is the added latency per request?

- Can the gateway be deployed on-premises or in a private cloud for data sovereignty?

Red Flags

- Solutions that only log but don't enforce

- Vendor lock-in to specific model providers

- Lack of data residency options

- No structured audit trail capability

- Inability to handle unstructured natural language inputs

How Difinity Flow Works as a Runtime AI Gateway

Difinity is an enterprise AI governance platform built around two integrated components: Difinity Hub, where administrators define policies, manage use cases, and configure compliance rules — and Difinity Flow, the runtime API layer that enforces those policies on every AI interaction.

Flow acts as the AI gateway. It sits between your applications and model providers (OpenAI, Anthropic, Gemini, DeepSeek, Grok, Mistral), intercepting every request and enforcing the governance rules defined in Hub. Rather than separating traffic management from policy enforcement, Difinity embeds governance directly into the request lifecycle:

- Every request is intercepted and evaluated against the policies configured in Difinity Hub before reaching any external model

- Sensitive data is automatically detected and handled — PII is redacted before requests leave your perimeter, then securely restored in responses so your applications work seamlessly

- Intelligent routing uses machine learning to select the optimal model based on cost, performance, and compliance requirements — or you can define manual routing rules

- Policies are applied consistently across all teams, applications, and use cases — control is not dependent on individual teams following procedures

- Every interaction is logged in full context — creating a complete, auditable record that satisfies EU AI Act, ISO 42001, and GDPR requirements

- Content evaluation checks for toxic content, harmful material, bias, and policy violations — both in prompts and responses

- Provider-compatible APIs allow migration with minimal code changes — use existing OpenAI or Anthropic SDK code, just point it at Difinity Flow

The result is an AI control system where governance is not an afterthought — it is a core part of how AI operates.
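The redact-then-restore behavior described in the list above can be illustrated generically with reversible placeholders. This is a sketch of the general technique under simple assumptions (emails only, in-memory mapping), not a description of how Difinity Flow implements it.

```python
import re

EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def tokenize_pii(text: str) -> tuple[str, dict[str, str]]:
    """Swap each distinct email for a placeholder and remember the mapping."""
    mapping: dict[str, str] = {}  # placeholder -> original value
    seen: dict[str, str] = {}     # original value -> placeholder

    def swap(match: re.Match) -> str:
        value = match.group(0)
        if value not in seen:
            placeholder = f"<EMAIL_{len(seen) + 1}>"
            seen[value] = placeholder
            mapping[placeholder] = value
        return seen[value]

    return EMAIL.sub(swap, text), mapping


def restore_pii(text: str, mapping: dict[str, str]) -> str:
    """Put original values back wherever the model echoed a placeholder."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


safe_prompt, mapping = tokenize_pii("Reply to ana@example.com and cc ana@example.com")
model_reply = "Drafted a reply to <EMAIL_1>."  # what a model might return
final = restore_pii(model_reply, mapping)
```

The mapping never leaves the gateway, so the external model only ever sees placeholders while the calling application receives the original values.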


FAQ

What is an AI gateway?

An AI gateway is a control layer placed between your applications and AI models, so that every request is verified, managed, and traced before processing. It provides a single point through which all AI interactions are governed.

How is an AI gateway different from an API gateway?

An API gateway manages general service traffic: routing, rate limiting, and authentication. An AI gateway extends beyond this by addressing AI-specific risks: inspecting unstructured natural language inputs, enforcing policies at runtime, detecting PII, and maintaining compliance-grade audit trails.

Does an AI gateway add latency?

Modern AI gateways are designed for low-latency operation. Inspection adds some per-request overhead, but optimized routing and caching of repeated requests can offset it and often improve overall efficiency.

Can an AI gateway prevent data leaks?

An AI gateway significantly reduces the risk of data leaks by identifying and handling sensitive data before it is transmitted to external models. While no system eliminates all risk, the runtime controls it provides make unintended data exposure far less probable.

Who is responsible for operating an AI gateway?

Responsibility is typically shared. Security and compliance teams define policies and requirements, while engineering teams handle integration and deployment. The gateway is the layer where these responsibilities converge into enforceable action.

What is an LLM gateway?

An LLM gateway is another term for an AI gateway, specifically emphasizing its role in governing interactions with large language models. The terms are used interchangeably in enterprise contexts.

How does an AI gateway support EU AI Act compliance?

For high-risk AI systems, the EU AI Act requires risk management, record-keeping through automatic event logging, and human oversight mechanisms. An AI gateway provides a technical foundation for these by intercepting, governing, and logging every AI interaction at runtime.

The Bottom Line

AI systems can only be trustworthy when they are controlled before they operate — not after. An AI gateway, when combined with governance enforcement, transforms AI from uncontrolled tooling into a controlled, compliant, and scalable enterprise system.

The organizations that implement this control layer now will lead in both innovation and compliance. Those that delay will face mounting regulatory risk, operational blind spots, and governance debt that becomes exponentially harder to address.

The question is not whether your enterprise needs an AI gateway. The question is whether you can afford to operate without one.

Ready to see runtime AI governance in action? Book a demo of Difinity or explore our pricing plans.