AI regulation is no longer a future concern. It is now an operational requirement.

The EU AI Act represents the most comprehensive legal framework for artificial intelligence to date. It does not simply recommend best practices — it enforces them. For enterprises, this creates a structural shift in how AI systems must be designed, deployed, and governed.

The key change is this: AI systems must now be controlled at runtime, not just documented in policy.

This distinction matters. Documentation can demonstrate intent, but enforcement proves compliance. Regulators are no longer asking whether organizations plan to manage AI risk — they are asking whether those controls are actively applied every time an AI system is used.

For enterprises operating across regions, especially those interacting with European users or data, the EU AI Act introduces a new baseline. Compliance is no longer optional, and it cannot be achieved through fragmented tools or manual oversight.

What Is the EU AI Act?

The EU AI Act (Regulation (EU) 2024/1689) is a legally binding regulation that governs how artificial intelligence systems are developed, deployed, and used within the European Union. Its scope is deliberately broad: it extends beyond EU-based companies to providers that place AI systems on the EU market and to organizations outside the EU whose AI output is used within the EU.

This means a company operating from outside Europe can still be subject to the regulation if its AI systems are offered on the EU market or their output is used there. In practice, this makes the EU AI Act one of the most globally impactful AI regulations currently in force.

At its core, the Act introduces a risk-based governance model. Instead of applying uniform rules to all AI systems, it categorizes them based on their potential impact on individuals and society. The higher the risk, the stricter the compliance requirements.

What makes this regulation fundamentally different is its emphasis on operational accountability. Organizations must not only define how their AI systems behave but also demonstrate that those behaviors are enforced consistently.

Timeline and Enforcement Reality

Although the EU AI Act has been formally adopted, its enforcement is being phased in to give organizations time to adapt. However, this phased approach should not be mistaken for flexibility.

Key milestones include:

- Early obligations already in effect: prohibited AI practices have been banned since 2 February 2025

- Increasing regulatory oversight through 2025: obligations for general-purpose AI models, supported by codes of practice, apply from 2 August 2025

- Enforcement for high-risk systems beginning in 2026: most high-risk compliance obligations apply from 2 August 2026

What is often underestimated is the implementation lead time required for compliance. Building governance infrastructure, integrating enforcement layers, and ensuring audit readiness can take months, if not longer.

Delaying preparation is not a neutral decision — it increases compliance risk. Organizations that wait may enter enforcement without the systems required to comply, making remediation significantly more complex and costly.

Why This Matters Now

The EU AI Act introduces a shift from reactive compliance to proactive enforcement.

Historically, many organizations have approached compliance through:

- Policy documentation

- Periodic audits

- Manual review processes

These approaches are no longer sufficient. The EU AI Act requires:

- Continuous monitoring

- Real-time policy enforcement

- Immediate risk mitigation

In practical terms, this means compliance must be embedded directly into the execution layer of AI systems. Every request, every response, and every data exchange must operate within defined and enforceable boundaries.

Risk Classification: How the EU AI Act Categorizes AI Systems

The regulation's risk-based framework determines how AI systems are governed. This classification is central to compliance because it directly defines the level of control required.

Unacceptable Risk: Prohibited Systems

Certain AI applications are considered inherently harmful and are banned outright. These include systems that:

- Manipulate behavior in ways that undermine individual autonomy

- Enable large-scale surveillance practices that violate fundamental rights

- Perform social scoring of individuals, whether by public or private actors

- Use real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions)

There is no compliance pathway for these systems. If an AI application falls into this category, it cannot be deployed or used under any circumstances within the EU.

Even if such outcomes are not intentional, organizations must have controls in place to ensure these use cases are never possible in practice.

High-Risk Systems: Strict Regulatory Control

This is the most critical category for enterprises.

High-risk systems are those that can significantly impact individuals' lives, safety, or rights. Common examples include AI used in:

- Healthcare — diagnostics and treatment recommendations

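- Finance: credit scoring and risk pricing for life and health insurance (systems used purely to detect financial fraud are carved out of the credit-scoring category)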

- Employment — hiring, promotion, or employee evaluation processes

- Education — student assessment and admissions decisions

- Critical infrastructure — energy, transport, water management

- Law enforcement — risk assessment, evidence evaluation

These systems are allowed, but only if they meet strict requirements. Enterprises must implement controls that ensure:

- Risks are continuously identified and mitigated

- Decisions can be explained and audited

- Human oversight is always available

- System behavior remains predictable and controlled

The operational burden is substantial. Compliance requires not just governance frameworks but technical systems that enforce those frameworks in real time.

Limited and Minimal Risk Systems

Lower-risk systems face fewer obligations, but they are not exempt. Transparency requirements still apply, particularly where users may not be aware they are interacting with AI.

An important nuance: risk classification depends on context. A system that appears low-risk in isolation can become high-risk depending on how it is used. A general-purpose LLM may fall under stricter rules when applied to regulated decision-making processes.

This dynamic nature of risk classification reinforces the need for continuous monitoring and governance — even for systems initially categorized as low-risk.
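The point that classification follows the use case rather than the model can be sketched in a few lines of Python. This is a simplified illustration, not a legal classifier: the tier names mirror the categories above, while the lookup sets, the `classify` function, and the use-case labels are hypothetical and would need legal review in practice.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical, heavily simplified lookup sets (assumptions for illustration only).
PROHIBITED_USES = {"social_scoring"}
HIGH_RISK_USES = {"credit_scoring", "hiring", "student_assessment", "medical_diagnosis"}
USER_FACING_USES = {"customer_chatbot"}


def classify(use_case: str) -> RiskTier:
    """Return the tier for a deployment based on its use case, not on the model."""
    if use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if use_case in HIGH_RISK_USES:
        return RiskTier.HIGH
    if use_case in USER_FACING_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# The same general-purpose model lands in different tiers depending on context.
deployments = [("general-llm", "internal_summarization"), ("general-llm", "hiring")]
tiers = {use_case: classify(use_case) for _, use_case in deployments}
```

Because the tier is a function of the deployment context, it has to be re-evaluated whenever an existing system is pointed at a new use case.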

Key Compliance Requirements for Enterprises

The EU AI Act translates its risk framework into concrete obligations. These requirements go beyond policy — they require implementation.

Risk Management as a Continuous Process

Enterprises must establish a structured approach to identifying and mitigating risks associated with AI systems:

- Evaluate potential failure points and edge cases

- Understand how systems behave under different conditions

- Implement safeguards to prevent harm

- Continuously adapt risk management as systems evolve

Risk management is not a one-time activity. It must evolve alongside the system, adapting to changes in data, usage, and external conditions.

Data Governance and Quality Control

The regulation places strong emphasis on the integrity of data used in AI systems. Poor-quality or biased data can lead to harmful outcomes, making data governance a central compliance requirement.

Organizations must ensure:

- Data is relevant to the intended use case

- Sources are traceable and documented

- Bias is identified and minimized where possible

- Personal data handling complies with GDPR requirements

This creates a direct intersection between AI governance and data privacy — organizations must manage both simultaneously.

Transparency and Accountability

Users must be informed when they are interacting with AI systems, especially in scenarios where decisions affect them directly. This requirement extends beyond simple disclosure — it includes providing meaningful information about how decisions are made.

Enterprises must also maintain accountability for system behavior: being able to explain outcomes, justify decisions, and demonstrate that appropriate safeguards were in place.

Human Oversight

The EU AI Act explicitly requires that high-risk AI systems include mechanisms for human intervention. This ensures automated decisions are not final and can be reviewed or overridden when necessary.

Human oversight must be practical and effective:

- Real-time intervention capabilities

- Clear escalation procedures

- Control over AI outputs before they are finalized or acted upon

- Ability to override or reverse automated decisions
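One way to picture these capabilities is a review queue that holds certain AI outcomes until a person confirms or overrides them. The sketch below is an assumption-laden illustration: the `Decision` and `OversightQueue` names, the 0.9 confidence threshold, and the rule that every "reject" outcome is escalated are all hypothetical choices, not requirements taken from the Act.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Decision:
    subject_id: str
    ai_outcome: str                      # what the model proposed
    confidence: float
    final_outcome: Optional[str] = None  # stays None until finalized
    reviewed_by: Optional[str] = None


@dataclass
class OversightQueue:
    threshold: float = 0.9               # hypothetical escalation threshold
    pending: list = field(default_factory=list)

    def submit(self, decision: Decision) -> Decision:
        # Adverse or low-confidence outcomes are held for a human reviewer.
        if decision.ai_outcome == "reject" or decision.confidence < self.threshold:
            self.pending.append(decision)
        else:
            decision.final_outcome = decision.ai_outcome
        return decision

    def review(self, decision: Decision, reviewer: str, outcome: str) -> Decision:
        # The reviewer may confirm the AI outcome or override it.
        decision.final_outcome = outcome
        decision.reviewed_by = reviewer
        self.pending.remove(decision)
        return decision


queue = OversightQueue()
held = queue.submit(Decision("applicant-1", "reject", 0.99))
queue.review(held, reviewer="alice", outcome="approve")  # human override
```

The key property is that an escalated decision has no final outcome until a named reviewer sets one, which also gives the audit trail a clear record of who intervened.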

Logging and Traceability

One of the most technically demanding requirements is the need for comprehensive logging.

Enterprises must maintain detailed records of:

- All AI requests and responses

- Data inputs and outputs

- Decisions made by the system

- Actions taken in response to those decisions

- Policy evaluations and enforcement outcomes

These logs must be structured to allow regulators to reconstruct events and verify compliance. Without this level of traceability, organizations cannot demonstrate adherence to the regulation.

This requires centralized logging systems that capture every interaction at runtime, ensuring complete auditability.
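As a rough illustration of what a reconstructable record might look like, the sketch below appends one structured entry per interaction and chains entries with SHA-256 hashes so that later tampering is detectable. The hash chain, the `AuditLog` class, and the field names are assumptions chosen for the example; the regulation does not prescribe a log format.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory, append-only log; a real system would use durable storage."""

    def __init__(self):
        self.records = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the hash itself, with stable key ordering.
        payload = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def append(self, request: str, response: str, policy_decision: str) -> dict:
        prev_hash = self.records[-1]["hash"] if self.records else "0" * 64
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "request": request,
            "response": response,
            "policy_decision": policy_decision,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self.records.append(record)
        return record

    def verify(self) -> bool:
        # Recompute each hash and check that every record points at its predecessor.
        prev = "0" * 64
        for record in self.records:
            if record["prev_hash"] != prev or record["hash"] != self._digest(record):
                return False
            prev = record["hash"]
        return True


log = AuditLog()
log.append("Summarize contract X", "Summary text", "allow")
log.append("Email jane@example.com", "[blocked]", "block:pii")
```

Calling `log.verify()` returns `True` for an untouched log and `False` once any stored field has been altered, which is the property an auditor needs in order to trust the reconstruction.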

Penalties for Non-Compliance

The EU AI Act introduces penalties designed to drive real behavioral change.

At the highest level, fines can reach:

| Violation Type | Maximum Fine (whichever is higher) |
|---|---|
| Prohibited AI practices | €35 million or 7% of global annual turnover |
| High-risk system violations | €15 million or 3% of global annual turnover |
| Providing incorrect information | €7.5 million or 1% of global annual turnover |

These figures place AI compliance alongside the most serious regulatory obligations faced by enterprises today — comparable to or exceeding GDPR penalties.
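To make the arithmetic concrete: for companies, the ceiling in each tier is generally the higher of the fixed amount and the turnover percentage. The sketch below works this through for a hypothetical company with EUR 2 billion in global annual turnover. The function name, the basis-point convention, and the turnover figure are illustrative assumptions; actual fines are set case by case, and the Act treats SMEs differently, which is not modeled here.

```python
def max_fine_eur(fixed_cap_eur: int, turnover_bp: int, global_turnover_eur: int) -> int:
    """Upper bound of a fine: the higher of a fixed cap and a share of turnover.

    turnover_bp is the percentage in basis points (700 = 7%), which keeps the
    arithmetic in integers and avoids floating-point rounding.
    """
    return max(fixed_cap_eur, global_turnover_eur * turnover_bp // 10_000)


turnover = 2_000_000_000  # hypothetical company

prohibited = max_fine_eur(35_000_000, 700, turnover)  # 7% of 2bn = 140,000,000
high_risk = max_fine_eur(15_000_000, 300, turnover)   # 3% of 2bn = 60,000,000
```

For a smaller company with EUR 100 million in turnover, 7% is only EUR 7 million, so the fixed EUR 35 million figure becomes the ceiling for the top tier instead.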

Even less severe violations can result in substantial penalties, along with increased scrutiny and potential operational restrictions. Beyond financial impact, non-compliance damages reputation and erodes trust with customers and partners.

This shifts AI governance into the realm of executive responsibility. It is no longer a technical issue — it is a business risk. Use our Penalty Calculator to estimate your organization's potential exposure.

Why Most Enterprises Are Not Ready

Despite growing awareness, many organizations are still far from meeting the requirements of the EU AI Act. The gaps are structural, not just procedural.

Documentation Without Enforcement

Policies are defined, but they are not integrated into the systems where AI operates. This creates a gap between intention and execution, which is exactly the gap regulators look for.

Fragmented Tool Landscapes

Enterprises often deploy separate solutions for security, compliance, monitoring, and API management. Without integration, these tools cannot provide a unified view or enforce consistent policies across all AI interactions.

Shadow AI Exposure

Employees use external AI tools independently, often without understanding the compliance implications. This creates blind spots where sensitive data can be exposed without oversight, audit trails are absent, and regulatory controls cannot be enforced.

Shadow AI creates direct compliance risk:

- Data leakage — sensitive information transmitted without controls

- No audit trail — no record of what data was shared or how it was used

- Regulatory exposure — inability to demonstrate governance over AI usage

No Centralized Control Layer

At a structural level, many organizations lack a centralized control layer that governs all AI interactions. Without this, consistent policy enforcement and audit-ready record-keeping are effectively impossible.

Building a Compliance Framework: A Step-by-Step Approach

A practical approach to compliance begins with visibility and extends to enforcement.

Step 1: AI System Inventory

Identify all AI systems in use across the organization — including unofficial or shadow usage. Map each system to its data sources, use cases, and risk profile. This creates the foundation for everything that follows.

Step 2: Risk Classification

Evaluate each system based on its impact and categorize it according to the EU AI Act's risk framework. This determines the level of control required for each system.

Step 3: Policy Definition

Define precise, enforceable policies covering:

- Data handling and residency requirements

- Access control and authorization rules

- Acceptable use boundaries

- PII detection and handling procedures

- Response filtering and content controls

Crucially, policies must be written in a way that allows them to be enforced programmatically — not just documented in PDFs.
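A minimal sketch of what "programmatically enforceable" can mean: the policy is a data structure, and a function evaluates each request against it and returns named violations. Everything here is hypothetical, including the `Policy` fields, the region and role names, and the deliberately simple email pattern standing in for real PII detection.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_regions: frozenset   # data residency
    allowed_roles: frozenset     # access control
    blocked_patterns: tuple      # regexes for content that must not be sent


# Simplified email pattern used as a stand-in for real PII detection.
EMAIL = r"[\w.+-]+@[\w-]+\.[\w.-]+"

policy = Policy(
    allowed_regions=frozenset({"eu-west-1", "eu-central-1"}),
    allowed_roles=frozenset({"analyst", "support"}),
    blocked_patterns=(EMAIL,),
)


def violations(policy: Policy, *, region: str, role: str, prompt: str) -> list:
    """Return the names of every rule the request breaks (empty list = compliant)."""
    found = []
    if region not in policy.allowed_regions:
        found.append("data_residency")
    if role not in policy.allowed_roles:
        found.append("access_control")
    if any(re.search(pattern, prompt) for pattern in policy.blocked_patterns):
        found.append("pii_detected")
    return found


result = violations(policy, region="us-east-1", role="analyst",
                    prompt="Draft a reply to bob@example.com")
# result lists "data_residency" and "pii_detected"
```

Because the policy is data rather than prose, the same definition can be versioned, reviewed, tested, and evaluated on every request.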

Step 4: Runtime Enforcement

This is where most organizations face the greatest challenge. Policies must be translated into controls that operate at runtime, ensuring every AI interaction is governed in real time.

This requires an AI gateway — a centralized control layer that intercepts, evaluates, and enforces policy on every request before it reaches an external model.

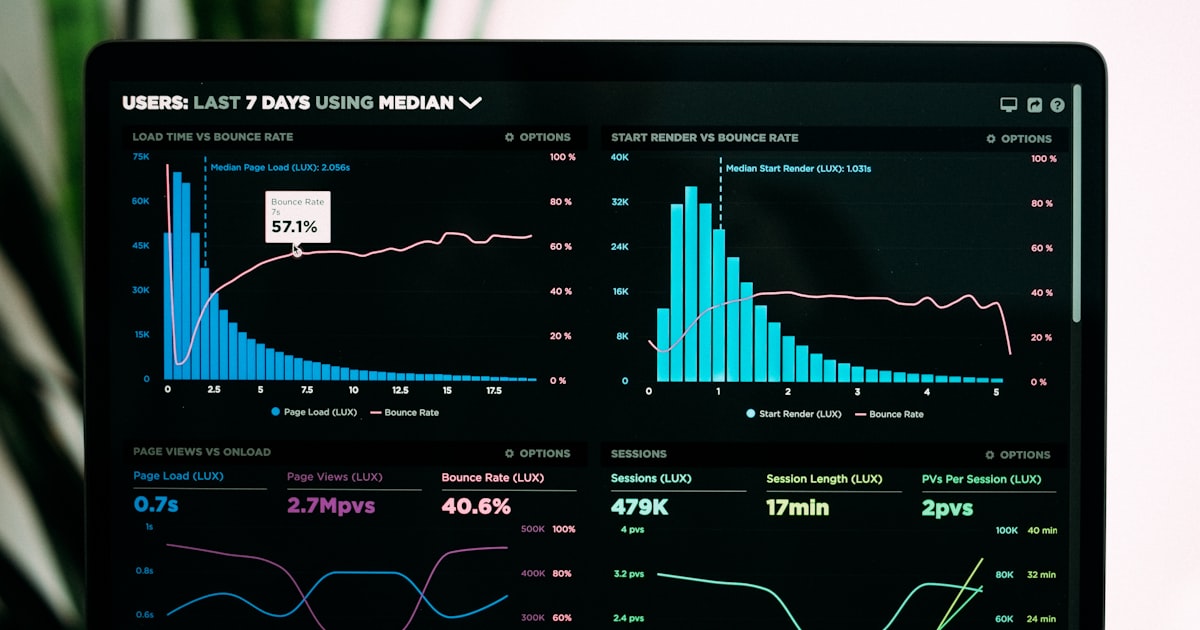
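The interception pattern can be sketched as a thin wrapper around whatever function calls the model: every prompt passes through a list of checks, and a failing check stops the request before it is forwarded. The `Gateway` class, the `PolicyViolation` exception, the 16-digit card-number check, and the stub model are all assumptions made for illustration, not a description of any particular product.

```python
import re
from typing import Callable, List, Optional


class PolicyViolation(Exception):
    """Raised when a prompt is blocked before reaching the model."""


class Gateway:
    def __init__(self, model: Callable[[str], str],
                 checks: List[Callable[[str], Optional[str]]]):
        self.model = model
        self.checks = checks

    def complete(self, prompt: str) -> str:
        # Every check runs before the model is called; the first failure blocks.
        for check in self.checks:
            reason = check(prompt)
            if reason:
                raise PolicyViolation(reason)
        return self.model(prompt)


def no_card_numbers(prompt: str) -> Optional[str]:
    # Hypothetical rule: block anything that looks like a 16-digit card number.
    return "possible card number" if re.search(r"\b\d{16}\b", prompt) else None


forwarded = []  # records what actually reached the (stub) model


def stub_model(prompt: str) -> str:
    forwarded.append(prompt)
    return f"echo: {prompt}"


gateway = Gateway(stub_model, [no_card_numbers])
answer = gateway.complete("Summarize our refund policy")

try:
    gateway.complete("Charge card 4111111111111111")
except PolicyViolation as exc:
    blocked_reason = str(exc)
```

After this runs, `forwarded` contains only the first prompt: the blocked request never left the gateway, which is the property that distinguishes runtime enforcement from after-the-fact review.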

Step 5: Monitoring and Logging

Establish centralized logging systems that capture:

- Full request and response content

- Policy decisions and enforcement actions

- Data transformations (redactions, masking)

- Routing decisions and provider selection

These systems must provide enough detail to support audits and regulatory investigations.

Step 6: Human Oversight Integration

Ensure that human oversight mechanisms are integrated into AI workflows — not bolted on as an afterthought. This includes escalation procedures, intervention capabilities, and decision review workflows.

Compliance Checklist for AI Governance Teams

A structured checklist helps organizations assess their readiness for EU AI Act compliance:

- Full visibility into all AI systems and usage patterns (including shadow AI)

- Risk classification completed for every AI system

- Governance policies defined and enforceable at runtime

- PII detection and redaction active on all AI interactions

- Data residency controls enforced based on regulatory requirements

- Comprehensive audit logging capturing every interaction

- Human oversight mechanisms integrated and operational

- Incident response procedures defined for AI-related violations

- Regular compliance reviews scheduled and documented

- Cross-framework alignment verified (EU AI Act + GDPR + ISO 42001)

Want a detailed, printable version? Download the full EU AI Act Compliance Checklist to assess your organization's readiness — share it with your governance, legal, and engineering teams.

How Difinity Automates EU AI Act Compliance

The primary challenge of the EU AI Act is translating requirements into operational controls. Difinity addresses this through two integrated components: Difinity Hub, where governance teams configure compliance policies, use case risk levels, and content moderation rules — and Difinity Flow, the runtime API that enforces those policies on every AI interaction.

Together, they ensure that every AI interaction is evaluated before it is executed:

- Request interception — every prompt is analyzed against policies defined in Hub before reaching any external model

- Automatic PII handling — sensitive data is detected and redacted before leaving your perimeter, then securely restored in responses

- Content evaluation — built-in checks for toxic content, harmful material, bias, and policy violations on both inputs and outputs

- Policy enforcement — compliance rules are applied consistently across all teams and use cases, with automatic blocking or escalation of violations

- Complete audit logging — every interaction is logged with full context, creating a traceable record that satisfies regulatory requirements

- Human escalation — configurable workflows route sensitive content for human review before AI responses are delivered

The result is a shift from reactive to proactive governance. Instead of identifying violations after they occur, organizations prevent them in real time.
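The redact-then-restore idea mentioned in the list above can be illustrated generically. The sketch below is not Difinity's implementation or API; it is a minimal, hypothetical example that swaps email addresses for placeholder tokens before a prompt is sent and maps the tokens back in the response. The regex, the token format, and the function names are assumptions.

```python
import re

# Simplified email pattern; real PII detection covers far more than emails.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text: str):
    """Replace each distinct email with a stable token; return text and mapping."""
    mapping = {}   # token -> original value
    reverse = {}   # original value -> token

    def swap(match):
        value = match.group(0)
        if value not in reverse:
            token = f"<EMAIL_{len(mapping) + 1}>"
            mapping[token] = value
            reverse[value] = token
        return reverse[value]

    return EMAIL_RE.sub(swap, text), mapping


def restore(text: str, mapping: dict) -> str:
    """Put the original values back into a model response."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


safe_prompt, mapping = redact("Reply to ana@example.com and cc ana@example.com")
# safe_prompt no longer contains the address; the mapping stays inside the perimeter
model_response = "Sent to <EMAIL_1>."
final = restore(model_response, mapping)
```

The mapping never leaves the organization, so the external model only ever sees the placeholder tokens.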

Mapping Difinity to ISO 42001, NIST AI RMF, and OECD Principles

Enterprises rarely operate under a single regulatory framework. In addition to the EU AI Act, alignment with international standards is essential.

| Framework | How Difinity Supports It |
|---|---|
| ISO 42001 | Structured AI management through consistent policy enforcement, monitoring, and documentation |
| NIST AI RMF | Continuous risk identification and mitigation at the operational level, with real-time controls |
| OECD AI Principles | Transparency and accountability through comprehensive logging, traceability, and human oversight mechanisms |
| GDPR | PII detection and redaction, data residency enforcement, and data protection controls integrated at the AI interaction layer |

This cross-framework alignment allows organizations to meet multiple regulatory requirements through a unified approach — reducing compliance overhead while increasing coverage.

FAQ

Does the EU AI Act apply to companies based outside the EU?

Yes. The regulation applies to organizations that offer AI systems to users in the EU or whose AI output is used in the EU. This means companies with no physical presence in Europe must comply if their systems interact with EU individuals. For most global enterprises, the EU AI Act effectively becomes a worldwide requirement.

What makes an AI system high-risk?

High-risk systems are those that impact critical decisions or regulated areas such as healthcare, finance, hiring, education, or public services. The classification depends on how the AI is used, not just the model itself. A general-purpose LLM can become high-risk when applied to sensitive decision-making contexts.

What is the biggest compliance gap for enterprises?

The largest gap is the lack of runtime enforcement. Many organizations define policies and governance frameworks, but those rules are not enforced when AI systems are actually used. This creates a disconnect between compliance on paper and real-world system behavior.

Can compliance be achieved through documentation alone?

No. The EU AI Act requires operational controls: monitoring, logging, data protection, and policy enforcement. These cannot be implemented through documentation alone. Enterprises need system-level controls that govern AI behavior in real time.

How should enterprises prepare?

Preparation should begin with full visibility into AI usage, followed by clear policy definition and, most importantly, implementation of enforcement mechanisms. Organizations that focus early on controlling and monitoring AI interactions at runtime will be significantly better positioned for compliance.

How does the EU AI Act relate to GDPR?

The EU AI Act complements GDPR by adding AI-specific requirements on top of existing data protection obligations. Where GDPR governs personal data handling, the EU AI Act extends governance to AI system behavior, decision-making, and risk management. Organizations must comply with both simultaneously.

How does risk classification affect compliance cost?

Higher-risk classifications require more extensive controls: continuous monitoring, comprehensive logging, human oversight, and detailed documentation. The compliance investment scales with risk level. However, organizations using a centralized AI gateway can address multiple requirements through a single infrastructure layer, reducing overall cost.

Final Takeaway

The EU AI Act is redefining what it means to operate AI systems at an enterprise level. Compliance is no longer about demonstrating intent — it is about proving control.

Organizations that invest in enforcement infrastructure now will adapt quickly, reduce risk, and maintain flexibility as regulations evolve. Those that rely on manual processes will struggle to keep pace with both regulatory demands and operational complexity.

The clock is ticking. Most obligations for high-risk systems take effect in August 2026.

Assess your AI systems' risk classification with our free EU AI Act Classifier, or calculate your potential penalty exposure. Ready to automate compliance? Book a demo of Difinity.